Racing towards a Grand Theory of Consciousness

Originally published in Chinese in Scientific American China, March 2021 print issue. Revised and substantially expanded in English, 2026, with updated results from the COGITATE adversarial collaboration, the Koch–Chalmers bet, and the open letter controversy. The original 2021 article can be read here.

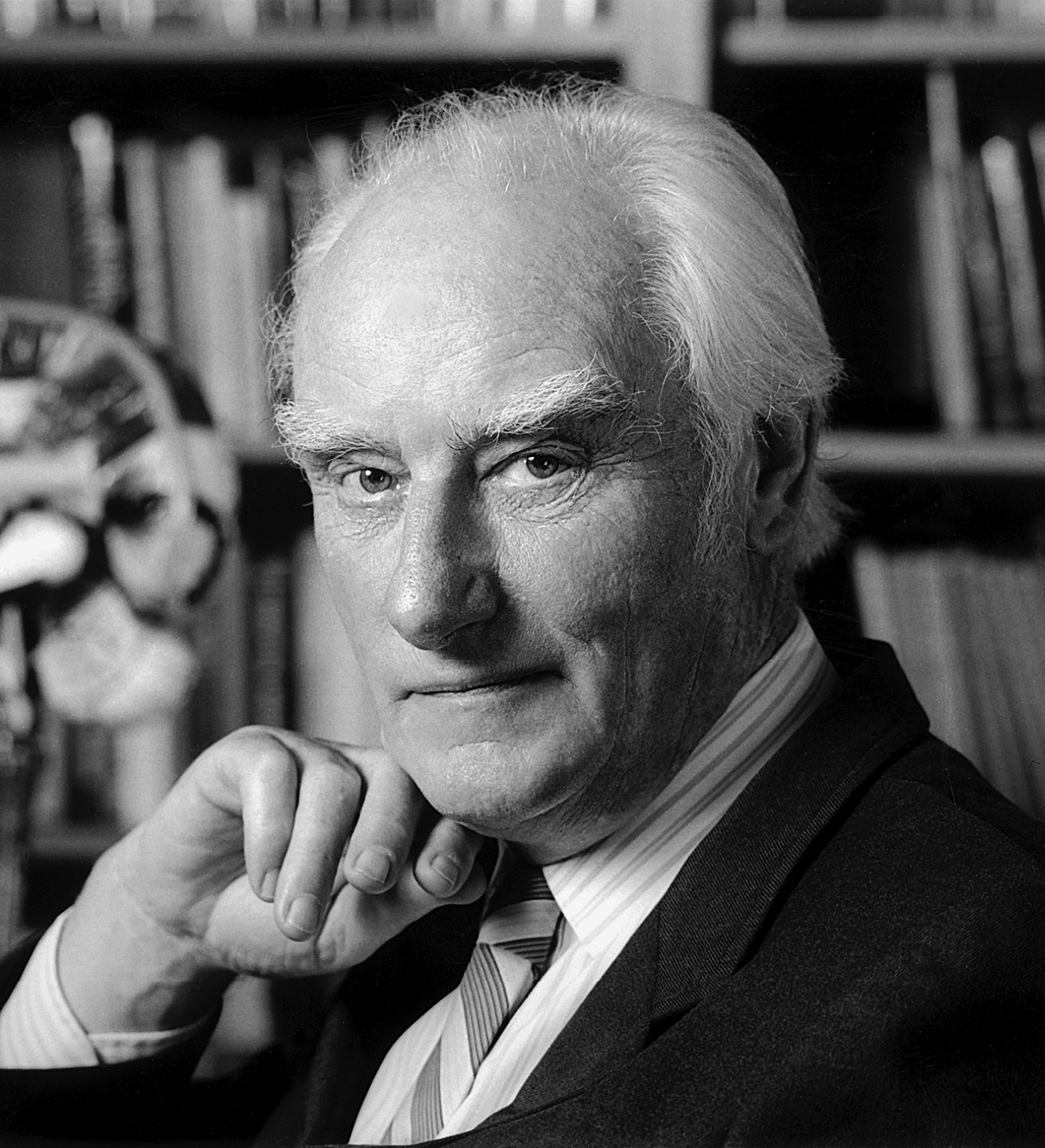

Francis Crick had already changed the world once. The double helix, the central dogma, the genetic code: by the mid-1970s the revolution he had helped start was running on its own momentum. Molecular biology had become a field with a workforce, a budget, and problems that no longer required Crick’s particular gift for seeing through to the structure of things. He was bored.

So he turned, in stages, toward what he considered the largest unsolved problem in biology: consciousness. In 1976 he moved from Cambridge to the Salk Institute in La Jolla, where the Pacific light and the Louis Kahn courtyard framed an intellectual freedom that the Medical Research Council had never quite offered. The early years were exploratory: he read psychology, attended seminars in neuroscience, began thinking about visual attention. Then, in the late 1980s, a young Caltech neuroscientist named Christof Koch started joining Crick for Friday afternoon conversations in his office at Salk. Koch was a physicist by training, an engineer by temperament, and a systems neuroscientist by ambition. Crick was seventy-three and still the sharpest presence in the room.

Their conversations, sustained over nearly two decades until Crick’s death in 2004, produced a programme that reshaped the field. In a 1990 paper in Seminars in the Neurosciences, Crick and Koch argued that the problem of consciousness should be attacked not philosophically but neurobiologically: find the minimal set of neural mechanisms sufficient for any one conscious experience, the ‘neural correlates of consciousness’ (NCC). ‘We believe the time is now ripe for an attack on the neural basis of consciousness,’ they wrote. The language was military, the ambition enormous. They were not asking what consciousness is in some final philosophical sense. They were asking what it does in the brain, and where.

Koch often returned to the problem of solipsism: can I ever truly know whether another person is conscious, or am I a mind trapped inside a skull? The NCC programme offered an empirical foothold. If one could identify the neural signature that separates a conscious perception from an unconscious one (the specific circuit, the specific firing pattern, the specific timing), then consciousness would cease to be purely a philosopher’s problem and become measurable. The skull prison would have a window.

Three decades later, no such mechanism has been found. Multiple competing theories claim to have identified it, or at least the right place to look. Large-scale adversarial collaborations have been built to test them against each other. And in June 2023, in a lecture hall in New York, Koch conceded that he had lost a twenty-five-year bet about when the answer would arrive.

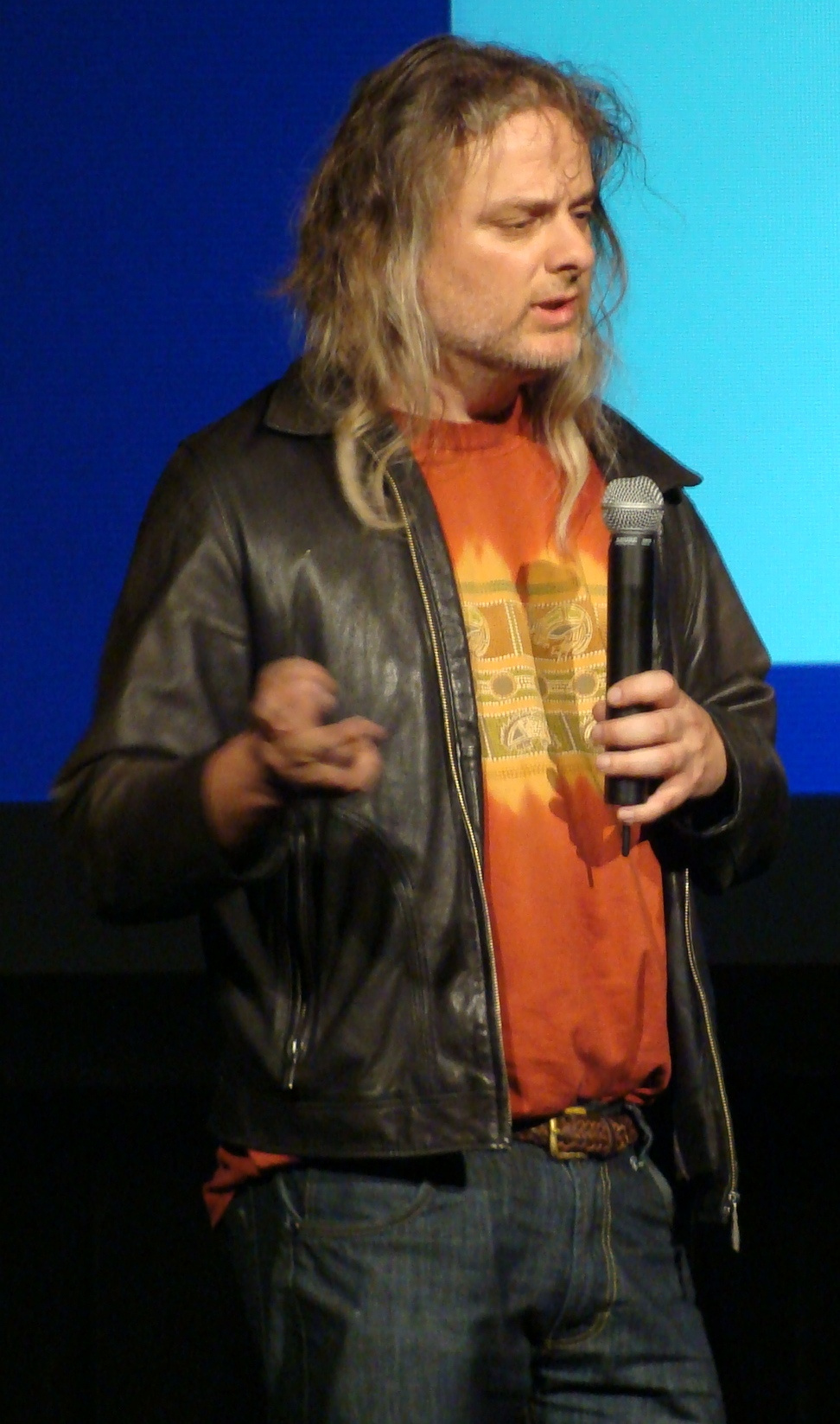

In April 1994, four years after Crick and Koch published their programme, a twenty-seven-year-old Australian philosopher named David Chalmers stood up at the University of Arizona’s ‘Toward a Science of Consciousness’ conference and named the problem that the NCC programme had not solved. Chalmers, who had arrived in Tucson with long hair and a leather jacket, looking less like a professional philosopher than a lead guitarist, drew a distinction that has since structured the entire debate.

The ‘easy problems’ of consciousness, Chalmers argued, are the functional ones: how the brain integrates information, how it directs attention, how it reports on its own internal states. These are hard engineering problems, but they are problems that neuroscience knows how to attack. The ‘Hard Problem’ is different. Even if one could explain every functional mechanism in the brain — every synapse, every circuit, every computation — a question would remain: why is there something it is like to undergo those processes? Why does the redness of red feel like anything at all? Why isn’t the whole system running in the dark?

Koch was in the audience. He asked Chalmers how one might bridge the gap between neural mechanism and subjective experience. Chalmers proposed that consciousness might originate in information itself, drawing on Claude Shannon’s information theory, developed in the 1940s, which had made it possible to treat information as a physical quantity. An object with a great deal of integrated information (a brain) would possess a great deal of consciousness; an object with very little (a thermostat) would possess only a trace.

The distinction between easy and Hard Problems has since become the field’s organising tension. One camp, broadly, holds that the Hard Problem is real — that no functional or mechanistic account of the brain will ever fully explain why subjective experience exists. The other camp, in the tradition of Daniel Dennett, holds that the Hard Problem is an illusion generated by the way the question is framed: solve all the easy problems and nothing will remain. Dennett, who died in April 2024 at the age of eighty-two, spent a career arguing that consciousness is not a mystery added on top of brain function but rather what brain function looks like from the inside. The debate did not resolve during his lifetime. It has not resolved since.

What it did was generate theories.

The most ambitious theory to grow from Chalmers’ information-based suggestion was Integrated Information Theory, or IIT, developed principally by Giulio Tononi, a psychiatrist and neuroscientist at the University of Wisconsin–Madison. Tononi’s path to IIT ran through sleep research. Studying the difference between the conscious waking brain and the unconscious sleeping brain had given him a precise empirical question: what is it about the way information is organised that makes one state conscious and the other not?

IIT’s answer is structural. The theory starts from five axioms about the properties of any conscious experience (it exists, it is structured, it is specific, it is unified, it is definite) and derives from them five corresponding postulates about the physical substrate that must produce such experience. The central claim is that consciousness is integrated information: a system is conscious to the degree that it integrates information in a way that cannot be reduced to the sum of its parts. That degree is measured by a quantity called phi (Φ). The higher a system’s phi, the more conscious it is.
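The idea of a whole that carries more than the sum of its parts can be made concrete with a toy calculation. The sketch below is not IIT's phi, which is defined over a system's cause-effect structure and is far more involved. It uses ordinary mutual information between two halves of a two-unit system as a stand-in, and the names `mutual_information`, `coupled`, and `independent` are illustrative assumptions rather than anything taken from the theory's own software.

```python
from math import log2

def mutual_information(joint):
    """Mutual information (in bits) between two parts A and B.

    `joint` maps (a, b) state pairs to probabilities that sum to 1.
    This is a stand-in for "integration", not IIT's phi.
    """
    # Marginal distribution of each part considered on its own.
    p_a, p_b = {}, {}
    for (a, b), p in joint.items():
        p_a[a] = p_a.get(a, 0.0) + p
        p_b[b] = p_b.get(b, 0.0) + p
    # Sum p(a,b) * log2(p(a,b) / (p(a) * p(b))) over states that can occur.
    return sum(
        p * log2(p / (p_a[a] * p_b[b]))
        for (a, b), p in joint.items()
        if p > 0
    )

# Two binary units that always agree: the whole has structure the parts lack.
coupled = {(0, 0): 0.5, (1, 1): 0.5}
# Two binary units that ignore each other: the whole is just its parts.
independent = {(a, b): 0.25 for a in (0, 1) for b in (0, 1)}

coupled_bits = mutual_information(coupled)
independent_bits = mutual_information(independent)
print(coupled_bits, independent_bits)
```

The coupled pair shares one bit across the cut and the independent pair shares none. IIT's actual measure asks a harder question about how a system's mechanisms constrain its own past and future states, but the intuition of information that disappears when the system is cut in two is the same.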

The implications are radical. In a 2009 Scientific American article, Koch drew out the logic: if consciousness is identical with integrated information, then any physical system that integrates information (not just brains but potentially any sufficiently interconnected structure) possesses some degree of consciousness. ‘A proton, composed of just three quarks, can also integrate information and has phi.’ This is panpsychism: the view that consciousness is not the brain’s exclusive property but a fundamental feature of the physical world, present wherever information is integrated.

IIT’s defenders point to clinical evidence. Tononi’s group developed the Perturbational Complexity Index (PCI), a measure that uses transcranial magnetic stimulation to probe how much a brain’s response to a zap spreads and differentiates, a proxy for how much integrated information it generates. PCI has proven remarkably effective at distinguishing conscious from unconscious patients at the bedside, identifying covert signs of awareness in roughly a third of patients clinically diagnosed as vegetative. As a diagnostic tool, the principle behind IIT has already saved lives.

But as a theory of consciousness, IIT has drawn fierce criticism. Computing phi exactly is widely regarded as computationally intractable (the problem is often described as NP-hard): the calculation is feasible only for very small systems and effectively impossible for any system of realistic size. The computer scientist Scott Aaronson published a 2014 critique arguing that IIT assigns high phi to trivially simple systems: a large grid of XOR gates, doing nothing interesting, would be maximally conscious according to the theory’s mathematics. Aaronson offered Tononi a ‘bullet hoagie with mustard’, his phrase for the unpalatable consequence that IIT forces one to swallow. Tononi responded by arguing that such grids lack the right causal structure, but the exchange exposed a tension between IIT’s mathematical elegance and its empirical tractability.
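A rough sense of why an exact phi calculation gets out of hand comes from simple counting. The sketch below assumes, as a simplification, that a brute-force calculation has to consider every way of cutting a system of n elements into parts. It counts two-way cuts and unrestricted partitions; the function names are illustrative, and the real calculation involves further steps beyond this search.

```python
def bipartitions(n):
    """Number of ways to split n labelled elements into two non-empty parts."""
    return 2 ** (n - 1) - 1

def bell_number(n):
    """Number of ways to split n labelled elements into any number of non-empty parts."""
    # Bell triangle: each new row starts with the last entry of the previous row,
    # and each further entry adds the entry to its left and the entry above that one.
    row = [1]
    for _ in range(n - 1):
        new_row = [row[-1]]
        for value in row:
            new_row.append(new_row[-1] + value)
        row = new_row
    return row[-1]

for n in (4, 10, 20, 40):
    print(n, bipartitions(n), bell_number(n))
```

Ten elements already allow 511 two-way cuts and 115,975 partitions in total, and both counts keep growing faster than any fixed power of n. A cortex has billions of neurons.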

If consciousness is integrated information, then anything that integrates information is conscious.

The deeper philosophical worry is simpler. Phi is a structural measure: it tells one how much information a system integrates, and IIT asserts that this quantity is consciousness. But no one has proposed a mechanism by which a particular value of phi gives rise to the felt quality of red, of pain, of a C-major chord. The theory names the quantity; it does not explain the experience. For some, this is the Hard Problem in a new coat.

The most prominent rival to IIT is Global Neuronal Workspace theory, or GNW. Its roots are older than IIT’s. In 1988, the psychologist Bernard Baars at the Wright Institute in California proposed Global Workspace Theory, borrowing a concept from artificial intelligence: specialised processing modules in the brain (vision, language, memory, motor planning) operate independently and in parallel, but when information needs to be shared across modules for a complex task, it is broadcast to a ‘global workspace’, a kind of cognitive blackboard visible to all.

Baars’ insight was that consciousness might be this broadcasting. The contents of the global workspace are precisely what one is conscious of at any given moment: the melody one is attending to, the face one is recognising, the sentence one is constructing. Everything else — the countless parallel computations humming along in specialised modules — remains unconscious. Consciousness, in this view, is not a substance or a property of matter. It is a mode of information processing: the moment when local signals go global.

The theory attracted the cognitive neuroscientist Stanislas Dehaene at the Collège de France, who, with colleagues including Jean-Pierre Changeux, proposed a neural mechanism for Baars’ workspace. In a 2001 paper in PNAS, they argued that long-range connections between prefrontal, parietal, and temporal cortex form the physical substrate of the global workspace. When sensory input is strong enough, it triggers an ‘ignition’ — a sudden, nonlinear amplification that broadcasts the signal across these long-range networks. Ignition is the neural signature of a percept becoming conscious. Below the ignition threshold, the same sensory input can be processed — one can respond to a subliminal stimulus, prime a motor response, even influence a later decision — but it never enters awareness.
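The threshold behaviour described here, where input either fades or suddenly takes over, can be illustrated with a toy model. The sketch below is not the Dehaene and Changeux network. It is a single self-exciting unit with a sigmoid response, and every parameter (`gain`, `feedback`, `threshold`, the input strengths) is an assumption chosen only to make the nonlinearity visible.

```python
from math import exp

def simulate(input_strength, steps=50, gain=10.0, feedback=1.0, threshold=0.6):
    """Iterate a single self-exciting unit and return its final activity (0 to 1).

    All parameter values are illustrative, not fitted to any data.
    """
    activity = 0.0
    for _ in range(steps):
        # Net drive: the unit's own activity fed back, plus external input,
        # minus a fixed threshold.
        drive = feedback * activity + input_strength - threshold
        # A steep sigmoid turns the drive into the next activity level.
        activity = 1.0 / (1.0 + exp(-gain * drive))
    return activity

weak = simulate(0.1)    # input too small to overcome the threshold
strong = simulate(0.4)  # input large enough for feedback to take over
print(round(weak, 3), round(strong, 3))
```

With the weak input the unit settles near zero; with the strong input, feedback amplifies the signal until activity saturates near one. A fourfold change in input produces a far larger change in outcome, which is the qualitative signature that ignition refers to.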

Dehaene’s group has identified four signatures of consciousness: a late P3b wave in event-related potentials, a sudden nonlinear amplification of neural activity, late sustained activation in a network of prefrontal and parietal areas, and long-distance synchronisation between distant brain regions. These signatures have been replicated across modalities and paradigms, and they provide GNW with a degree of empirical specificity that few competing theories can match.

IIT and GNW make directly opposing predictions about where consciousness lives in the brain. IIT holds that the posterior cortex, where neural connectivity supports rich information integration, is the primary seat of consciousness. GNW holds that the prefrontal cortex, where long-range broadcasting and ignition occur, is critical. The two theories, as the neuroscientist Cyriel Pennartz pointed out in an interview for the original version of this article, generate a ‘differential hypothesis’: directly opposing predictions that can be tested experimentally. That possibility made adversarial collaboration attractive.

A third family of theories rejects the terms of the IIT–GNW debate entirely. Higher-Order Theories (HOT), developed most fully by the philosopher David Rosenthal at the City University of New York, start from a different question: what makes a mental state conscious rather than unconscious?

Rosenthal’s answer invokes the transitivity principle: a mental state is conscious when and only when the subject is aware of being in that state. Seeing red is a first-order mental state. Being aware that one is seeing red, having a thought about the seeing, is a higher-order state. Without the higher-order representation, the first-order state can still influence behaviour (one might dodge a red object reflexively) but it is not conscious. Consciousness, in this view, is not the processing itself but the monitoring of processing: cognition about cognition, metacognition.

This is not merely a philosophical distinction. Hakwan Lau, then at UCLA, and Joseph LeDoux at NYU have developed higher-order approaches with distinct neural predictions. Lau’s Perceptual Reality Monitoring model holds that the prefrontal cortex does not generate conscious content; it monitors lower-level representations and determines which ones ‘feel real’. LeDoux, a fear-conditioning researcher who converted to higher-order theory after a sabbatical at All Souls, Oxford, argues that subjective emotional experience requires a higher-order representation of the lower-order survival circuit. Fear, in his account, is not the amygdala firing; fear is the cortical monitoring system representing the amygdala’s output as ‘something I am feeling’.

HOT’s experimental evidence comes in part from dissociations between performance and confidence. In a study published in PNAS, participants were shown a target shape for just 33 milliseconds, too brief for confident perception, followed by a masking stimulus after either a short or a long interval. Researchers found that while accuracy in identifying the shape was comparable at the short and long intervals, confidence that one had ‘truly seen’ the shape was significantly higher at the longer interval. Cognition, in other words, can proceed without metacognition. And the neural substrate of the metacognitive component was localised to the mid-dorsolateral prefrontal cortex, providing HOT with a candidate mechanism.

The deeper challenge facing HOT is the one facing all third-party theories in a crowded field: it can explain the transition from unconscious to conscious processing, but it has not yet provided a full account of why higher-order monitoring produces subjective experience rather than simply more sophisticated information routing.

The problem, by 2019, was that the theories had been arguing past each other for decades. IIT, GNW, HOT, and several others had each accumulated supporting evidence, refined their predictions, and built loyal research communities, but they had rarely been tested against each other using the same experimental paradigms, the same stimuli, the same participants. Each laboratory tested its own theory on its own terms. The field had grown rich in theories and poor in arbitration.

In 2019, the Templeton World Charity Foundation launched a $20 million initiative called Accelerating Research on Consciousness, or ARC. The concept was ‘adversarial collaboration’: bring proponents of competing theories to the same table, force them to preregister opposing predictions derived from their theories, then test those predictions using the same experimental design, the same data, and independent judges.

‘The days of the lone genius scientist solving big problems in the laboratory are over,’ said Dawid Potgieter, senior programme officer at the Templeton World Charity Foundation, in an interview. ARC would fund the experiments, fund replication studies, and make all data openly available. Theory proponents would meet in structured ‘adversarial seminars’, debate their disagreements, identify the predictions that most clearly separated their theories, and then go back to their laboratories to collect the data that would prove one of them wrong.

The ambition was considerable. If it worked, ARC would demonstrate not only which theory was closer to the truth but that the adversarial model itself was superior to the traditional publish-and-hope approach.

Not everyone was convinced. Anil Seth, a cognitive neuroscientist at the University of Sussex and an ARC participant, warned that the competing theories ‘have built too many different assumptions, have different levels of falsifiability, and are even trying to explain different things’. Rosenthal argued that the supposed ‘competition’ between IIT and GNW was partly illusory: ‘IIT addresses creature consciousness’ (whether a whole organism is conscious) ‘whereas GNW addresses state consciousness’ (what conditions a particular mental state must satisfy to be conscious). If the theories were not answering the same question, testing them against each other might produce a winner without producing understanding.

Lau was more pointed. Although he admired Koch, Lau said in an interview that Koch’s role chairing the adversarial seminars naturally troubled scientists supporting theories other than IIT. ‘His attitude toward IIT is certainly not neutral.’ Seminar decisions, Lau said, were ‘basically biased. We could indeed propose our own ideas, but ultimately, Tononi and Koch would directly veto proposals they disliked and suggest that everyone investigate the questions they had wanted to investigate all along.’ The level playing field, in practice, tilted.

Others considered these concerns overstated. Liu Ling, a postdoctoral researcher in Luo Huan’s laboratory at Peking University, which participated in ARC, said that ‘different theories do have their own definitions of consciousness, but they can find common ground to some extent’. Pennartz agreed: what supporters of different theories needed to do was identify ‘differential hypotheses’ — directly opposing predictions. ‘Is consciousness located in the prefrontal cortex or in the posterior cortex?’ was precisely such a hypothesis. The competition, Pennartz said, was real.

ARC launched five adversarial collaborations testing eight theories in total. The first and largest was COGITATE.

COGITATE (Collaboration On GNW and IIT: Testing Alternative Theories of Experience) recruited 256 participants across six laboratories on three continents. Participants viewed visual stimuli while their brain activity was recorded using fMRI, MEG, and intracranial EEG. Before data collection began, proponents of IIT and GNW preregistered their predictions in detail, specifying not just which brain regions would be active during conscious perception but how that activity would unfold over time, and what patterns of connectivity would be present.

The results, published in Nature in April 2025, were neither a clean victory for one theory nor a simple refutation of the other.

IIT had predicted that conscious perception would be associated with sustained activity in the posterior cortex, with rich connectivity patterns among visual and temporal regions reflecting the integrated information structure that the theory considers identical with consciousness. Two of IIT’s three preregistered predictions were supported: consciousness was indeed more closely linked to posterior cortical activity than to prefrontal activity, and the sustained temporal profile predicted by IIT was observed. But the third prediction — that synchronised connectivity between early visual and mid-level visual areas would track consciousness — was not confirmed. The specific signature of information integration that IIT considers its deepest commitment was absent from the data.

GNW had predicted a rapid ‘ignition’ signature in prefrontal and parietal regions, with content-specific information broadcast from posterior to anterior cortex. The results were less kind. Ignition following stimulus onset was observed, but no corresponding signal appeared at stimulus offset, when, according to GNW, the workspace should also fire. More damaging, the prefrontal cortex showed minimal representation of the identity and orientation of the consciously perceived stimulus. The content of consciousness, according to the COGITATE data, was not in the place that GNW said it would be.

Three independent judges (scientists who had not been involved in designing the predictions) concluded that IIT had fared ‘slightly better’ than GNW. Neither theory was fully supported. Neither was fully refuted. The result was, in the most literal sense, underdetermined: the data narrowed the field without closing it.

Nearly two years before the COGITATE paper appeared in Nature, the most public moment in the adversarial collaboration’s history had already occurred, not in a laboratory but on a stage. On 23 June 2023, at the 26th annual meeting of the Association for the Scientific Study of Consciousness in New York City, Christof Koch conceded a bet.

The bet had been made in 1998, in a smoky bar in Bremen, Germany, after a conference. Koch wagered Chalmers that within twenty-five years a clear neural correlate of consciousness, a specific, agreed-upon mechanism, would be identified. The stakes were a case of fine wine. By 2023, no such mechanism had been identified. Koch walked onto the stage in New York carrying a case of Portuguese wine, including a bottle of 1978 Madeira, and presented it to Chalmers.

‘I’ve lost the battle,’ Koch told the audience, ‘but won the war for science.’

They doubled down immediately: a new bet extending to 2048, when Koch will be ninety-one and Chalmers eighty-two. Chalmers’ response carried the dry precision of a man who has been right before: ‘I hope I lose, but I suspect I’ll win.’

The exchange was theatrical, but the substance was not. The fact that twenty-five years of sustained, well-funded research had not produced a consensus answer to the NCC question was itself a datum. It did not mean that the question was unanswerable. It meant that the answer was harder than the optimists of 1998 had expected — harder, perhaps, than any single theory currently on the table could provide.

Less than three months after Koch’s concession in New York, the theoretical peace collapsed. On 15 September 2023, 124 researchers posted an open letter on PsyArXiv accusing IIT of being ‘pseudoscience’. The signatories included Hakwan Lau, Joseph LeDoux, Bernard Baars, Patricia Churchland, Keith Frankish, and Daniel Dennett. Their primary target was IIT’s panpsychist commitments: the claim that consciousness is a fundamental feature of matter, present in protons and thermostats and photodiodes, seemed to the letter’s authors to place the theory beyond the reach of scientific refutation. ‘As researchers, we have a duty to protect the public from scientific misinformation,’ they wrote.

The letter was a grenade thrown into a field that had been working, however imperfectly, toward structured disagreement. Anil Seth, who had not signed, called the label ‘inflammatory’. The Nature editorial board stated that ‘such language has no place’ in collaborative science. Tononi’s group responded that the letter ‘had much fervor and little fact’. Koch pointed out that panpsychism, whatever its empirical status, is a position with a serious philosophical pedigree stretching from Spinoza through Whitehead to Chalmers himself.

The controversy exposed a fault line deeper than any experimental result. IIT’s critics were not merely disputing a prediction; they were challenging the theory’s right to be called scientific at all. The panpsychism question was the pressure point. If consciousness is truly a fundamental property of integrated information, then it pervades nature — a conclusion that strikes many neuroscientists as unfalsifiable mysticism and strikes IIT’s defenders as a necessary consequence of taking the Hard Problem seriously.

The open letter also revealed the limits of adversarial collaboration. ARC was designed to settle disputes between theories that agreed to play by empirical rules. But the deepest disagreements in consciousness science are not about which brain region lights up during which task. They are about what kind of question consciousness is: a functional problem to be solved by neuroscience, a structural property to be formalised by mathematics, or a philosophical puzzle that no amount of data will dissolve.

Where does this leave the science of consciousness in 2026?

The COGITATE results have done what adversarial collaboration is supposed to do: they have made the field’s ignorance specific. Before COGITATE, one could gesture vaguely at the prefrontal cortex or the posterior cortex and claim support for one’s preferred theory. After COGITATE, the gestures must answer to data. The prefrontal cortex did not represent conscious content in the way GNW predicted. The posterior cortex did not show the connectivity signatures that IIT predicted. Both theories survived the collaboration, but both emerged diminished.

ARC’s second adversarial collaboration, INTREPID, is testing IIT against Predictive Processing and Cyriel Pennartz’s neurorepresentationalism, using paradigms ranging from optogenetics in mice to visual scotoma experiments in humans. Its results, presented in preliminary form at a September 2024 symposium in Glasgow, are expected to refine the picture further.

Meanwhile, a question has arrived that ARC’s original design did not anticipate: consciousness in machines. The rapid development of large language models since 2022 has made the question practical. A system that processes information, represents its own states, and generates outputs humans find meaningful presents the existing theories with an object they were not built to classify. IIT’s mathematics could in principle assign a phi value to any substrate, but computing phi for a biological brain is already intractable. GNW’s ignition signatures were defined in cortical tissue. HOT’s metacognitive monitoring was theorised for organisms that evolved it. The theories were built to explain brains, and whether they explain anything else is not a question the adversarial collaborations were designed to answer.

Koch, in a Scientific American interview before the COGITATE results were published, cited the nineteenth-century philosopher Auguste Comte’s infamous claim that humanity would never know the chemical composition of the stars. ‘Comte declared that we could never know what the stars are made of. But shortly after he said this, spectroscopy was born, revealing their chemical composition.’ Koch remains convinced that consciousness will yield to science, and that the adversarial collaboration model, for all its imperfections, its tilted playing fields, its grenades lobbed from the sidelines, is the best mechanism the field has found for converting conviction into evidence.

Chalmers, on the other side of the bet, is more patient. The Hard Problem has not dissolved. It has not even softened. In 2048, Koch will be ninety-one, and the question he and Crick set out to answer in a Friday-afternoon office at Salk will either have yielded or it will not. The arc from 1990 to 2048 is long enough to hold two full scientific careers, and may still not be long enough to hold the answer.

Interviews with Anil Seth, Cyriel Pennartz, Hakwan Lau, David Rosenthal, Dawid Potgieter, and Liu Ling were conducted for the original Chinese-language feature in Scientific American China, March 2021 print issue. The English revision draws on Crick and Koch’s 1990 programme and Koch’s account in The Quest for Consciousness (2004); Chalmers’ formulation of the Hard Problem (1995); Tononi and Koch on IIT in Phil. Trans. R. Soc. B (2015); Mashour, Roelfsema, Changeux, and Dehaene on GNW in Neuron (2020); Brown, Lau, and LeDoux on HOT in Trends Cogn. Sci. (2019); Casali et al. on PCI in Sci. Transl. Med. (2013); the COGITATE results in Nature (2025); Nature’s report on the Koch–Chalmers bet (2023); and the open letter on IIT (2023). Aaronson’s critique of IIT appeared on his blog (2014); Tononi’s response in BMC Neuroscience (2014). The ARC initiative is funded by the Templeton World Charity Foundation.